NVIDIA Unveils Nemotron-CC: A Trillion-Token Dataset for Enhanced LLM Training

You may also like

Electric Capital: Tracking 501 types of yield-generating RWA assets, we discovered these patterns

Those who are cut off by AI will not disappear; they will become the creators of the next round of the economy

Stablecoins reshaping cross-border payments in Asia? Strategic panorama and investment opportunity analysis

Zuckerberg is building an AI agent to help him as CEO

Bloomberg: Swiss Private Bank Old Guard Rifts, Is Bitcoin the Spark?

Zuckerberg is building an AI assistant to help him be CEO

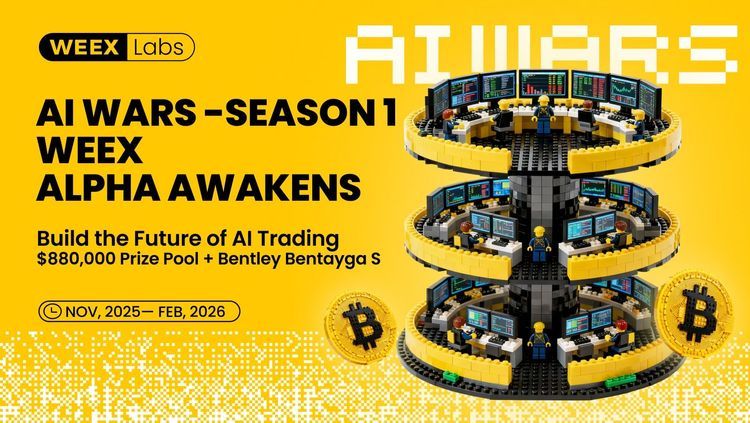

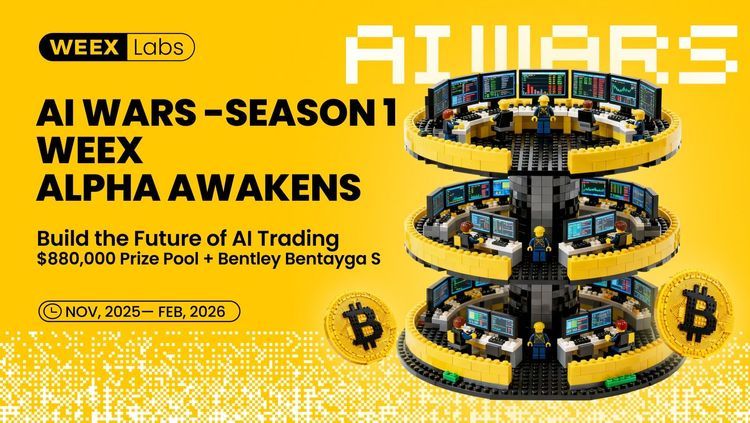
